?生产环境有一个Redis会大发源码出售QQ2952777280【话仙源码论坛】hxforum.com 偶尔发生连接失败的报错,报错的时间点、客户端IP并没有特别明显的规律,过一会儿,报错会自动恢复。?以下是客户端报错信息:CRedis.C...

?生产环境有一个Redis会大发源码出售QQ2952777280【话仙源码论坛】hxforum.com 偶尔发生连接失败的报错,报错的时间点、客户端IP并没有特别明显的规律,过一会儿,报错会自动恢复。

?以下是客户端报错信息:

CRedis.Client.RExceptions.ExcuteCommandException: Unable to Connect redis server: ---> CRedis.Third.Redis.RedisException: Unable to Connect redis server:

在 CRedis.Third.Redis.RedisNativeClient.CreateConnectionError()

在 CRedis.Third.Redis.RedisNativeClient.SendExpectData(Byte[][] cmdWithBinaryArgs)

在 CRedis.Client.Entities.RedisServer.<>c__DisplayClassd`1.

?从报错的信息来看,应该是连接不上Redis所致。Redis的版本是2.8.19。虽然版本有点老,但基本运行稳定。

?线上环境只有这个集群有偶尔报错。这个集群的一个比较明显的特征是客户端服务器比较多,有上百台。

【问题分析】

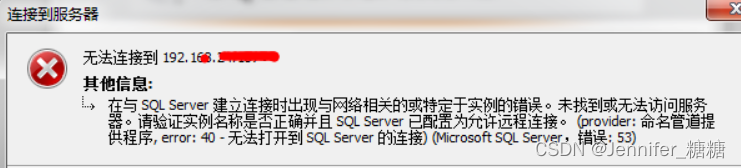

?从报错的信息来看,客户端连接不到服务端。常见的原因有以下几点:

一个常见的原因是由于端口耗尽,对网络连接进行排查,在出问题的点上,TCP连接数远没有达到端口耗尽的场景,因此这个不是Redis连接不上的根本原因。

另外一种常见的场景是在服务端有慢查询,导致Redis服务阻塞。我们在Redis服务端,把运行超过10毫秒的语句进行抓取,也没有抓到运行慢的语句。

?从服务端的部署的监控来看,出问题的点上,连接数有一个突然飙升,从3500个连接突然飙升至4100个连接。如下图显示:

同时间,服务器端显示Redis服务端有丢包现象:345539 – 344683 = 856个包。

Sat Apr 7 10:41:40 CST 2018

1699 outgoing packets dropped

92 dropped because of missing route

344683 SYNs to LISTEN sockets dropped

344683 times the listen queue of a socket overflowed

Sat Apr 7 10:41:41 CST 2018

1699 outgoing packets dropped

92 dropped because of missing route

345539 SYNs to LISTEN sockets dropped

345539 times the listen queue of a socket overflowed

?客户端报错的原因基本确定,是因为建连速度太快,导致服务端backlog队列溢出,连接被server端reset。

【关于backlog overflow】

?在高并发的短连接服务中,这是一种很常见的tcp报错类型。一个正常的tcp建连过程如下:

?1.client发送一个(SYN)给server

?2.server返回一个(SYN,ACK)给client

?3.client返回一个(ACK)

?三次握手结束,对client来说建连成功,client可以继续发送数据包给server,但是这个时候server端未必ready,如下图所示 :

在BSD版本内核实现的tcp协议中,server端建连过程需要两个队列,一个是SYN queue,一个是accept queue。前者叫半开连接(或者半连接)队列,在接收到client发送的SYN时加入队列。(一种常见的网络***方式就是不断发送SYN但是不发送ACK从而导致server端的半开队列撑爆,server端拒绝服务。)后者叫全连接队列,server返回(SYN,ACK),在接收到client发送ACK后(此时client会认为建连已经完成,会开始发送PSH包),如果accept queue没有满,那么server从SYN queue把连接信息移到accept queue;如果此时accept queue溢出的话,server的行为要看配置。如果tcp_abort_on_overflow为0(默认),那么直接drop掉client发送的PSH包,此时client会进入重发过程,一段时间后server端重新发送SYN,ACK,重新从建连的第二步开始;如果tcp_abort_on_overflow为1,那么server端发现accept queue满之后直接发送reset。

通过wireshark搜索发现在一秒内有超过2000次对Redis Server端发起建连请求。我们尝试修改tcp backlog大小,从511调整到2048, 问题并没有得到解决。所以此类微调,并不能彻底的解决问题。

【网络包分析】

我们用wireshark来识别网络拥塞的准确时间点和原因。我们已经有了准确的报错时间点,先用editcap把超大的tcp包裁剪一下,裁成30秒间隔,并通过wireshark I/O 100ms间隔分析网络阻塞的准确时间点:

?根据图标可以明显看到tcp的packets来往存在block。

?对该block前后的网络包进行明细分析,网络包来往情况如下:

Time Source Dest Description

??12:01:54.6536050?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…AP…

??12:01:54.6538580?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…AP…

??12:01:54.6539770?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…AP…

??12:01:54.6720580?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…A..S..

??12:01:54.6727200?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…A……

??12:01:54.6808480?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…AP…..

??12:01:54.6910840?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…A…S.,

??12:01:54.6911950?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…A……

??… ?? ??… ?? ?? … ?? ?? …

??12:01:56.1181350?? ??Redis-Server?? ??Clients?? ??TCP:Flags=…AP….

12:01:54.6808480, Redis Server端向客户端发送了一个Push包,也就是对于查询请求的一个结果返回。后面的包都是在做连接处理,包括Ack包,Ack确认包,以及重置的RST包,紧接着下面一个Push包是在12:01:56.1181350发出的。中间的间隔是1.4372870秒。也就是说,在这1.4372870秒期间,Redis的服务器端,除了做一个查询,其他的操作都是在做建连,或拒绝连接。

客户端报错的前后逻辑已经清楚了,redis-server卡了1.43秒,client的connection pool被打满,疯狂新建连接,server的accept queue满,直接拒绝服务,client报错。开始怀疑client发送了特殊命令,这时需要确认一下client的最后几个命令是什么,找到redis-server卡死前的第一个包,装一个wireshark的redis插件,看到最后几个命令是简单的get,并且key-value都很小,不至于需要耗费1.43秒才能完成。服务端也没有slow log,此时排障再次陷入僵局。

【进一步分析】

为了了解这1.43秒之内,Redis Server在做什么事情,我们用pstack来抓取信息。Pstack本质上是gdb attach. 高频率的抓取会影响redis的吞吐。死循环0.5秒一次无脑抓,在redis-server卡死的时候抓到堆栈如下(过滤了没用的栈信息):

Thu May 31 11:29:18 CST 2018

Thread 1 (Thread 0x7ff2db6de720 (LWP 8378)):

#0 0x000000000048cec4 in ?? ()

#1 0x00000000004914a4 in je_arena_ralloc ()

#2 0x00000000004836a1 in je_realloc ()

#3 0x0000000000422cc5 in zrealloc ()

#4 0x00000000004213d7 in sdsRemoveFreeSpace ()

#5 0x000000000041ef3c in clientsCronResizeQueryBuffer ()

#6 0x00000000004205de in clientsCron ()

#7 0x0000000000420784 in serverCron ()

#8 0x0000000000418542 in aeProcessEvents ()

#9 0x000000000041873b in aeMain ()

#10 0x0000000000420fce in main ()

Thu May 31 11:29:19 CST 2018

Thread 1 (Thread 0x7ff2db6de720 (LWP 8378)):

#0 0x0000003729ee5407 in madvise () from /lib64/libc.so.6

#1 0x0000000000493a4e in je_pages_purge ()

#2 0x000000000048cf70 in ?? ()

#3 0x00000000004914a4 in je_arena_ralloc ()

#4 0x00000000004836a1 in je_realloc ()

#5 0x0000000000422cc5 in zrealloc ()

#6 0x00000000004213d7 in sdsRemoveFreeSpace ()

#7 0x000000000041ef3c in clientsCronResizeQueryBuffer ()

#8 0x00000000004205de in clientsCron ()

#9 0x0000000000420784 in serverCron ()

#10 0x0000000000418542 in aeProcessEvents ()

#11 0x000000000041873b in aeMain ()

#12 0x0000000000420fce in main ()

Thu May 31 11:29:19 CST 2018

Thread 1 (Thread 0x7ff2db6de720 (LWP 8378)):

#0 0x000000000048108c in je_malloc_usable_size ()

#1 0x0000000000422be6 in zmalloc ()

#2 0x00000000004220bc in sdsnewlen ()

#3 0x000000000042c409 in createStringObject ()

#4 0x000000000042918e in processMultibulkBuffer ()

#5 0x0000000000429662 in processInputBuffer ()

#6 0x0000000000429762 in readQueryFromClient ()

#7 0x000000000041847c in aeProcessEvents ()

#8 0x000000000041873b in aeMain ()

#9 0x0000000000420fce in main ()

Thu May 31 11:29:20 CST 2018

Thread 1 (Thread 0x7ff2db6de720 (LWP 8378)):

#0 0x000000372a60e7cd in write () from /lib64/libpthread.so.0

#1 0x0000000000428833 in sendReplyToClient ()

#2 0x0000000000418435 in aeProcessEvents ()

#3 0x000000000041873b in aeMain ()

#4 0x0000000000420fce in main ()

重复多次抓取后,从堆栈中发现可疑堆栈clientsCronResizeQueryBuffer位置,属于serverCron()函数下,这个redis-server内部的定时调度,并不在用户线程下,这个解释了为什么卡死的时候没有出现慢查询。

查看redis源码,确认到底redis-server在做什么:

clientsCron(server.h):

#define CLIENTS_CRON_MIN_ITERATIONS 5

void clientsCron(void) {

/* Make sure to process at least numclients/server.hz of clients

- per call. Since this function is called server.hz times per second

- we are sure that in the worst case we process all the clients in 1

-

second. */

int numclients = listLength(server.clients);

int iterations = numclients/server.hz;

mstime_t now = mstime();/* Process at least a few clients while we are at it, even if we need

- to process less than CLIENTS_CRON_MIN_ITERATIONS to meet our contract

-

of processing each client once per second. */

if (iterations < CLIENTS_CRON_MIN_ITERATIONS)

iterations = (numclients < CLIENTS_CRON_MIN_ITERATIONS) ?

numclients : CLIENTS_CRON_MIN_ITERATIONS;while(listLength(server.clients) && iterations--) {

client c;

listNode head;/* Rotate the list, take the current head, process. * This way if the client must be removed from the list it's the * first element and we don't incur into O(N) computation. */ listRotate(server.clients); head = listFirst(server.clients); c = listNodeValue(head); /* The following functions do different service checks on the client. * The protocol is that they return non-zero if the client was * terminated. */ if (clientsCronHandleTimeout(c,now)) continue; if (clientsCronResizeQueryBuffer(c)) continue;}

}

clientsCron首先判断当前client的数量,用于控制一次清理连接的数量,生产服务器单实例的连接数量在5000不到,也就是一次清理的连接数是50个。

clientsCronResizeQueryBuffer(server.h):

/* The client query buffer is an sds.c string that can end with a lot of

- free space not used, this function reclaims space if needed.

-

The function always returns 0 as it never terminates the client. /

int clientsCronResizeQueryBuffer(client c) {

size_t querybuf_size = sdsAllocSize(c->querybuf);

time_t idletime = server.unixtime - c->lastinteraction;/* 只在以下两种情况下会Resize query buffer:

- 1) Query buffer > BIG_ARG(在server.h 中定义#define PROTO_MBULK_BIG_ARG (1024*32))

且这个Buffer的小于一段时间的客户端使用的峰值. - 2) 客户端空闲超过2s且Buffer size大于1k. /

if (((querybuf_size > PROTO_MBULK_BIG_ARG) &&

(querybuf_size/(c->querybuf_peak+1)) > 2) ||

(querybuf_size > 1024 && idletime > 2))

{

/ Only resize the query buffer if it is actually wasting space. /

if (sdsavail(c->querybuf) > 1024) {

c->querybuf = sdsRemoveFreeSpace(c->querybuf);

}

}

/ Reset the peak again to capture the peak memory usage in the next - cycle. */

c->querybuf_peak = 0;

return 0;

}

如果redisClient对象的query buffer满足条件,那么就直接resize掉。满足条件的连接分成两种,一种是真的很大的,比该客户端一段时间内使用的峰值还大;还有一种是很闲(idle>2)的,这两种都要满足一个条件,就是buffer free的部分超过1k。那么redis-server卡住的原因就是正好有那么50个很大的或者空闲的并且free size超过了1k大小连接的同时循环做了resize,由于redis都属于单线程工作的程序,所以block了client。那么解决这个问题办法就很明朗了,让resize 的频率变低或者resize的执行速度变快。

- 1) Query buffer > BIG_ARG(在server.h 中定义#define PROTO_MBULK_BIG_ARG (1024*32))

既然问题出在query buffer上,我们先看一下这个东西被修改的位置:

readQueryFromClient(networking.c):

redisClient createClient(int fd) {

redisClient c = zmalloc(sizeof(redisClient));

/* passing -1 as fd it is possible to create a non connected client.

* This is useful since all the Redis commands needs to be executed

* in the context of a client. When commands are executed in other

* contexts (for instance a Lua script) we need a non connected client. */

if (fd != -1) {

anetNonBlock(NULL,fd);

anetEnableTcpNoDelay(NULL,fd);

if (server.tcpkeepalive)

anetKeepAlive(NULL,fd,server.tcpkeepalive);

if (aeCreateFileEvent(server.el,fd,AE_READABLE,

readQueryFromClient, c) == AE_ERR)

{

close(fd);

zfree(c);

return NULL;

}

}

selectDb(c,0);

c->id = server.next_client_id++;

c->fd = fd;

c->name = NULL;

c->bufpos = 0;

c->querybuf = sdsempty(); 初始化是0readQueryFromClient(networking.c):

void readQueryFromClient(aeEventLoop el, int fd, void privdata, int mask) {

redisClient c = (redisClient) privdata;

int nread, readlen;

size_t qblen;

REDIS_NOTUSED(el);

REDIS_NOTUSED(mask);

server.current_client = c;

readlen = REDIS_IOBUF_LEN;

/* If this is a multi bulk request, and we are processing a bulk reply

* that is large enough, try to maximize the probability that the query

* buffer contains exactly the SDS string representing the object, even

* at the risk of requiring more read(2) calls. This way the function

* processMultiBulkBuffer() can avoid copying buffers to create the

* Redis Object representing the argument. */

if (c->reqtype == REDIS_REQ_MULTIBULK && c->multibulklen && c->bulklen != -1

&& c->bulklen >= REDIS_MBULK_BIG_ARG)

{

int remaining = (unsigned)(c->bulklen+2)-sdslen(c->querybuf);

if (remaining < readlen) readlen = remaining;

}

qblen = sdslen(c->querybuf);

if (c->querybuf_peak < qblen) c->querybuf_peak = qblen;

c->querybuf = sdsMakeRoomFor(c->querybuf, readlen); 在这里会被扩大由此可见c->querybuf在连接第一次读取命令后的大小就会被分配至少102432,所以回过头再去看resize的清理逻辑就明显存在问题,每个被使用到的query buffer的大小至少就是102432,但是清理的时候判断条件是>1024,也就是说,所有的idle>2的被使用过的连接都会被resize掉,下次接收到请求的时候再重新分配到1024*32,这个其实是没有必要的,在访问比较频繁的群集,内存会被频繁得回收重分配,所以我们尝试将清理的判断条件改造为如下,就可以避免大部分没有必要的resize操作:

if (((querybuf_size > REDIS_MBULK_BIG_ARG) &&

(querybuf_size/(c->querybuf_peak+1)) > 2) ||

(querybuf_size > 102432 && idletime > 2))

{

/ Only resize the query buffer if it is actually wasting space. /

if (sdsavail(c->querybuf) > 102432) {

c->querybuf = sdsRemoveFreeSpace(c->querybuf);

}

}

这个改造的副作用是内存的开销,按照一个实例5k连接计算,5000102432=160M,这点内存消耗对于上百G内存的服务器完全可以接受。

【问题重现】

在使用修改过源码的Redis server后,问题仍然重现了,客户端还是会报同类型的错误,且报错的时候,服务器内存依然会出现抖动。抓取内存堆栈信息如下:

Thu Jun 14 21:56:54 CST 2018

#3 0x0000003729ee893d in clone () from /lib64/libc.so.6

Thread 1 (Thread 0x7f2dc108d720 (LWP 27851)):

#0 0x0000003729ee5400 in madvise () from /lib64/libc.so.6

#1 0x0000000000493a1e in je_pages_purge ()

#2 0x000000000048cf40 in arena_purge ()

#3 0x00000000004a7dad in je_tcache_bin_flush_large ()

#4 0x00000000004a85e9 in je_tcache_event_hard ()

#5 0x000000000042c0b5 in decrRefCount ()

#6 0x000000000042744d in resetClient ()

#7 0x000000000042963b in processInputBuffer ()

#8 0x0000000000429762 in readQueryFromClient ()

#9 0x000000000041847c in aeProcessEvents ()

#10 0x000000000041873b in aeMain ()

#11 0x0000000000420fce in main ()

Thu Jun 14 21:56:54 CST 2018

Thread 1 (Thread 0x7f2dc108d720 (LWP 27851)):

#0 0x0000003729ee5400 in madvise () from /lib64/libc.so.6

#1 0x0000000000493a1e in je_pages_purge ()

#2 0x000000000048cf40 in arena_purge ()

#3 0x00000000004a7dad in je_tcache_bin_flush_large ()

#4 0x00000000004a85e9 in je_tcache_event_hard ()

#5 0x000000000042c0b5 in decrRefCount ()

#6 0x000000000042744d in resetClient ()

#7 0x000000000042963b in processInputBuffer ()

#8 0x0000000000429762 in readQueryFromClient ()

#9 0x000000000041847c in aeProcessEvents ()

#10 0x000000000041873b in aeMain ()

#11 0x0000000000420fce in main ()

显然,Querybuffer被频繁resize的问题已经得到了优化,但是还是会出现客户端报错。这就又陷入了僵局。难道还有其他因素导致query buffer resize变慢?我们再次抓取pstack。但这时,jemalloc引起了我们的注意。此时回想Redis的内存分配机制,Redis为避免libc内存不被释放导致大量内存碎片的问题,默认使用的是jemalloc用作内存分配管理,这次报错的堆栈信息中都是je_pages_purge () redis在调用jemalloc回收脏页。我们看下jemalloc做了些什么:

arena_purge(arena.c)

static void

arena_purge(arena_t arena, bool all)

{

arena_chunk_t chunk;

size_t npurgatory;

if (config_debug) {

size_t ndirty = 0;

arena_chunk_dirty_iter(&arena->chunks_dirty, NULL,

chunks_dirty_iter_cb, (void *)&ndirty);

assert(ndirty == arena->ndirty);

}

assert(arena->ndirty > arena->npurgatory || all);

assert((arena->nactive >> opt_lg_dirty_mult) < (arena->ndirty -

arena->npurgatory) || all);

if (config_stats)

arena->stats.npurge++;

npurgatory = arena_compute_npurgatory(arena, all);

arena->npurgatory += npurgatory;

while (npurgatory > 0) {

size_t npurgeable, npurged, nunpurged;

/* Get next chunk with dirty pages. */

chunk = arena_chunk_dirty_first(&arena->chunks_dirty);

if (chunk == NULL) {

arena->npurgatory -= npurgatory;

return;

}

npurgeable = chunk->ndirty;

assert(npurgeable != 0);

if (npurgeable > npurgatory && chunk->nruns_adjac == 0) {

arena->npurgatory += npurgeable - npurgatory;

npurgatory = npurgeable;

}

arena->npurgatory -= npurgeable;

npurgatory -= npurgeable;

npurged = arena_chunk_purge(arena, chunk, all);

nunpurged = npurgeable - npurged;

arena->npurgatory += nunpurged;

npurgatory += nunpurged;

}}

Jemalloc每次回收都会判断所有实际应该清理的chunck并对清理做count,这个操作对于高响应要求的系统是很奢侈的,所以我们考虑通过升级jemalloc的版本来优化purge的性能。Redis 4.0版本发布后,性能有很大的改进,并可以通过命令回收内存,我们线上也正准备进行升级,跟随4.0发布的jemalloc版本为4.1,jemalloc的版本使用的在jemalloc的4.0之后版本的arena_purge()做了很多优化,去掉了计数器的调用,简化了很多判断逻辑,增加了arena_stash_dirty()方法合并了之前的计算和判断逻辑,增加了purge_runs_sentinel,用保持脏块在每个arena LRU中的方式替代之前的保持脏块在arena树的dirty-run-containing chunck中的方式,大幅度减少了脏块purge的体积,并且在内存回收过程中不再移动内存块。代码如下:

arena_purge(arena.c)

static void

arena_purge(arena_t *arena, bool all)

{

chunk_hooks_t chunk_hooks = chunk_hooks_get(arena);

size_t npurge, npurgeable, npurged;

arena_runs_dirty_link_t purge_runs_sentinel;

extent_node_t purge_chunks_sentinel;

arena->purging = true;

/*

* Calls to arena_dirty_count() are disabled even for debug builds

* because overhead grows nonlinearly as memory usage increases.

*/

if (false && config_debug) {

size_t ndirty = arena_dirty_count(arena);

assert(ndirty == arena->ndirty);

}

assert((arena->nactive >> arena->lg_dirty_mult) < arena->ndirty || all);

if (config_stats)

arena->stats.npurge++;

npurge = arena_compute_npurge(arena, all);

qr_new(&purge_runs_sentinel, rd_link);

extent_node_dirty_linkage_init(&purge_chunks_sentinel);

npurgeable = arena_stash_dirty(arena, &chunk_hooks, all, npurge,

&purge_runs_sentinel, &purge_chunks_sentinel);

assert(npurgeable >= npurge);

npurged = arena_purge_stashed(arena, &chunk_hooks, &purge_runs_sentinel,

&purge_chunks_sentinel);

assert(npurged == npurgeable);

arena_unstash_purged(arena, &chunk_hooks, &purge_runs_sentinel,

&purge_chunks_sentinel);

arena->purging = false;}

【解决问题】

实际上我们有多个选项。可以使用Google的tcmalloc来代替jemalloc,可以升级jemalloc的版本等等。我们根据上面的分析,尝试通过升级jemalloc版本,实际操作为升级Redis版本来解决。我们将Redis的版本升级到4.0.9之后观察,线上客户端连接超时这个棘手的问题得到了解决。

【问题总结】

Redis在生产环境中因其支持高并发,响应快,易操作被广泛使用,对于运维人员而言,其响应时间的要求带来了各种各样的问题,Redis的连接超时问题是其中比较典型的一种,从发现问题,客户端连接超时,到通过抓取客户端与服务端的网络包,内存堆栈定位问题,也被其中一些假象所迷惑,最终通过升级jemalloc(Redis)的版本解决问题,这次最值得总结和借鉴的是整个分析的思路。

本文标题为:Redis偶发连接大发源码出售失败案例分析

- Oracle 删除大量表记录操作分析总结 2023-07-23

- Numpy中如何创建矩阵并等间隔抽取数据 2023-07-28

- 搭建单机Redis缓存服务的实现 2023-07-13

- 在阿里云CentOS 6.8上安装Redis 2023-09-12

- SQLSERVER调用C#的代码实现 2023-07-29

- Mongodb启动报错完美解决方案:about to fork child process,waiting until server is ready for connections. 2023-07-16

- SQL Server 2022 AlwaysOn新特性之包含可用性组详解 2023-07-29

- 基于Python制作一个简单的文章搜索工具 2023-07-28

- MySQL8.0.28安装教程详细图解(windows 64位) 2023-07-26

- redis清除数据 2023-09-13