This article walks through a worked example of building a Redis cluster deployed on k8s, for readers who need a reference for the setup.

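Preface

In the previous article, we created six Redis replicas (pods).

For the details, see: Deploying a Redis Cluster on k8s (Part 1)

Next, we continue with building the Redis cluster itself.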

1. Building the Redis cluster

1.1 Creating the cluster with redis-cli

# List the IPs of the Redis pods

kubectl get pod -n jxbp -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

redis-0 1/1 Running 0 18h 10.168.235.196 k8s-master <none> <none>

redis-1 1/1 Running 0 18h 10.168.235.225 k8s-master <none> <none>

redis-2 1/1 Running 0 18h 10.168.235.239 k8s-master <none> <none>

redis-3 1/1 Running 0 18h 10.168.235.198 k8s-master <none> <none>

redis-4 1/1 Running 0 18h 10.168.235.222 k8s-master <none> <none>

redis-5 1/1 Running 0 18h 10.168.235.238 k8s-master <none> <none>

# Enter the redis-0 container

kubectl exec -it redis-0 -n jxbp -- /bin/bash

# Create the master nodes (redis-0, redis-2, redis-4)

redis-cli --cluster create 10.168.235.196:6379 10.168.235.239:6379 10.168.235.222:6379 -a jxbd

Warning: Using a password with '-a' or '-u' option on the command line interface may not be safe.

>>> Performing hash slots allocation on 3 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

M: bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379

slots:[0-5460] (5461 slots) master

M: 4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379

slots:[5461-10922] (5462 slots) master

M: a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379

slots:[10923-16383] (5461 slots) master

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

.

>>> Performing Cluster Check (using node 10.168.235.196:6379)

M: bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379

slots:[0-5460] (5461 slots) master

M: a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379

slots:[10923-16383] (5461 slots) master

M: 4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379

slots:[5461-10922] (5462 slots) master

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.
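The slot ranges in the output above come from dividing the 16384 hash slots as evenly as possible among the masters. The following is a minimal Python sketch of such a split. The function name `split_slots` and the rounding rule are assumptions made for illustration rather than redis-cli's actual code, but for three masters the sketch reproduces the ranges printed above.

```python
import math

TOTAL_SLOTS = 16384  # fixed number of hash slots in a Redis Cluster


def split_slots(master_count: int) -> list[tuple[int, int]]:
    """Return inclusive (first, last) slot ranges, one per master."""
    if master_count < 1:
        raise ValueError("need at least one master")
    per_master = TOTAL_SLOTS / master_count  # fractional share per master
    ranges = []
    first = 0
    cursor = 0.0
    for index in range(master_count):
        # Round half up explicitly instead of relying on round().
        last = math.floor(cursor + per_master - 1 + 0.5)
        if index == master_count - 1 or last > TOTAL_SLOTS - 1:
            last = TOTAL_SLOTS - 1  # the final master absorbs the remainder
        ranges.append((first, last))
        first = last + 1
        cursor += per_master
    return ranges


for number, (first, last) in enumerate(split_slots(3)):
    print(f"Master[{number}] -> Slots {first} - {last}")
```

Because 16384 is not divisible by 3, one master ends up with 5462 slots and the other two with 5461, which matches the slot counts reported by redis-cli.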

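Note the node IDs generated for the master nodes above: bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 (10.168.235.196), 4367e4a45e557406a3112e7b79f82a44d4ce485e (10.168.235.239), and a2cec159bbe2efa11a8f60287b90927bcb214729 (10.168.235.222). They are needed when adding the slave nodes.

# Add a slave node for each master

# The second address (10.168.235.196:6379 below) can be any master already in the cluster; we usually use the first master, i.e. the IP of redis-0

# --cluster-master-id specifies the ID of the master that the new slave node replicates

# -a specifies the Redis password

# Add redis-1 as the slave of the redis-0 master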

redis-cli --cluster add-node 10.168.235.225:6379 10.168.235.196:6379 --cluster-slave --cluster-master-id bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 -a jxbd

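# Add redis-3 (10.168.235.198) as a slave; --cluster-master-id a2cec159... selects the master at 10.168.235.222 (redis-4), regardless of which existing node is given as the second address

redis-cli --cluster add-node 10.168.235.198:6379 10.168.235.239:6379 --cluster-slave --cluster-master-id a2cec159bbe2efa11a8f60287b90927bcb214729 -a jxbd

# Add redis-5 (10.168.235.238) as a slave; --cluster-master-id 4367e4a4... selects the master at 10.168.235.239 (redis-2)

redis-cli --cluster add-node 10.168.235.238:6379 10.168.235.222:6379 --cluster-slave --cluster-master-id 4367e4a45e557406a3112e7b79f82a44d4ce485e -a jxbd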

The following output indicates that the node was added successfully:

[OK] All nodes agree about slots configuration.

[OK] All 16384 slots covered.

[OK] New node added correctly.
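Since each add-node call has to pair a slave address with the right master ID, it is easy to mix the IDs up when typing the commands by hand. As a hedged illustration, the Python sketch below builds the three commands from a single mapping. The helper name `add_replica_command` is hypothetical, and the pairing is the one reported by `cluster nodes` in this article; the sketch only prints the commands and does not run them.

```python
import shlex


def add_replica_command(replica_addr, existing_addr, master_id, password=None):
    """Build the redis-cli argument list for adding one slave node."""
    args = [
        "redis-cli", "--cluster", "add-node", replica_addr, existing_addr,
        "--cluster-slave", "--cluster-master-id", master_id,
    ]
    if password is not None:
        args += ["-a", password]
    return args


# master ID -> address of the slave that should replicate it
pairs = {
    "bcae187137a9b30d7dab8fe0d8ed4a46c6e39638": "10.168.235.225:6379",
    "a2cec159bbe2efa11a8f60287b90927bcb214729": "10.168.235.198:6379",
    "4367e4a45e557406a3112e7b79f82a44d4ce485e": "10.168.235.238:6379",
}
existing_node = "10.168.235.196:6379"  # any node already in the cluster

for master_id, replica_addr in pairs.items():
    command = add_replica_command(replica_addr, existing_node, master_id, "jxbd")
    print(shlex.join(command))
```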

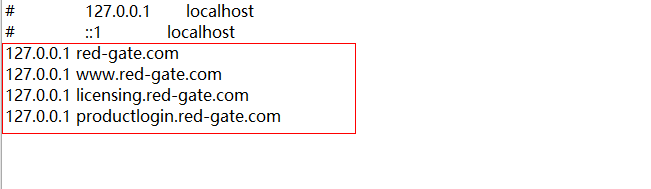

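Pitfall:

At first I wanted to create the Redis cluster using the headless Service domain names, so that no IP would need updating after a node restarts. However, Redis did not support domain names for cluster nodes (hostname support only arrived in Redis 7.0), so after going full circle I was back to using the pod IPs directly, which fits poorly with a container environment.

1.2 Verifying the Redis cluster state (optional)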

- cluster info

# Start the Redis client; cluster mode needs -c, and a password needs -a

redis-cli -c -a jxbd

# Show the Redis cluster information

127.0.0.1:6379> cluster info

cluster_state:ok

cluster_slots_assigned:16384

cluster_slots_ok:16384

cluster_slots_pfail:0

cluster_slots_fail:0

cluster_known_nodes:6

cluster_size:3

cluster_current_epoch:3

cluster_my_epoch:1

cluster_stats_messages_ping_sent:7996

cluster_stats_messages_pong_sent:7713

cluster_stats_messages_sent:15709

cluster_stats_messages_ping_received:7710

cluster_stats_messages_pong_received:7996

cluster_stats_messages_meet_received:3

cluster_stats_messages_received:15709

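Note:

Redis can now be reached from any pod in the cluster. Earlier we created a headless Service, and the Kubernetes cluster assigns each pod behind it a DNS record of the form $(pod name).$(headless service name).$(namespace).svc.cluster.local. Resolving that name leads directly to the corresponding Redis node. The svc.cluster.local suffix can be omitted. For example: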

redis-cli -c -a jxbd -h redis-0.redis-hs.jxbp -p 6379
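To make the naming rule concrete, here is a small Python sketch that assembles the per-pod DNS names. The function `pod_dns_name` is a hypothetical helper, and `cluster.local` is assumed to be the cluster domain (the common default); the pod, Service, and namespace names are the ones used in this article.

```python
def pod_dns_name(pod, service, namespace, cluster_domain="cluster.local", short=False):
    """Return the DNS name of a StatefulSet pod behind a headless Service."""
    base = f"{pod}.{service}.{namespace}"
    if short:
        return base  # form with the svc.<cluster domain> suffix omitted
    return f"{base}.svc.{cluster_domain}"


# The six pods created by the StatefulSet are named redis-0 .. redis-5.
for ordinal in range(6):
    print(pod_dns_name(f"redis-{ordinal}", "redis-hs", "jxbp"))
```

The short form, `pod_dns_name("redis-0", "redis-hs", "jxbp", short=True)`, yields the host name used in the redis-cli example above.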

- cluster nodes

# Show the state of the Redis cluster nodes

127.0.0.1:6379> cluster nodes

70220b45e978d0cb3df19b07e55d883b49f4127d 10.168.235.238:6379@16379 slave 4367e4a45e557406a3112e7b79f82a44d4ce485e 0 1670306292673 2 connected

122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.225:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670306290558 1 connected

c2afcb9e83038a47d04bf328ead8033788548234 10.168.235.198:6379@16379 slave a2cec159bbe2efa11a8f60287b90927bcb214729 0 1670306291162 3 connected

4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 master - 0 1670306291561 2 connected 5461-10922

bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379@16379 myself,master - 0 1670306291000 1 connected 0-5460

a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379@16379 master - 0 1670306292166 3 connected 10923-16383

The output shows 3 master nodes and 3 slave nodes, all in the connected state.
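The same check can be done programmatically instead of by eye. The sketch below parses the `cluster nodes` text shown above and counts masters, slaves, and connection states. The field positions (ID, address, flags, master ID, then link state as the eighth field) follow the output in this article; the function names are illustrative assumptions.

```python
from collections import Counter

SAMPLE = """\
70220b45e978d0cb3df19b07e55d883b49f4127d 10.168.235.238:6379@16379 slave 4367e4a45e557406a3112e7b79f82a44d4ce485e 0 1670306292673 2 connected
122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.225:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670306290558 1 connected
c2afcb9e83038a47d04bf328ead8033788548234 10.168.235.198:6379@16379 slave a2cec159bbe2efa11a8f60287b90927bcb214729 0 1670306291162 3 connected
4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 master - 0 1670306291561 2 connected 5461-10922
bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379@16379 myself,master - 0 1670306291000 1 connected 0-5460
a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379@16379 master - 0 1670306292166 3 connected 10923-16383
"""


def parse_cluster_nodes(text):
    """Turn `cluster nodes` output into a list of dictionaries."""
    nodes = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 8:
            continue  # skip blank or malformed lines
        nodes.append({
            "id": fields[0],
            "addr": fields[1].split("@")[0],  # drop the cluster bus port
            "flags": fields[2].split(","),
            "master_id": None if fields[3] == "-" else fields[3],
            "link_state": fields[7],
            "slots": fields[8:],
        })
    return nodes


def summarize(nodes):
    """Return (master count, slave count, whether every link is connected)."""
    roles = Counter("master" if "master" in n["flags"] else "slave" for n in nodes)
    all_connected = all(n["link_state"] == "connected" for n in nodes)
    return roles["master"], roles["slave"], all_connected


print(summarize(parse_cluster_nodes(SAMPLE)))
```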

- get/set verification

# The client computes the key's hash slot and is redirected to the node that owns it, here 10.168.235.222

set name1 llsydn

-> Redirected to slot [12933] located at 10.168.235.222:6379

OK

# set name1 succeeds

10.168.235.222:6379> set name1 llsydn

OK

# get name1 succeeds

10.168.235.222:6379> get name1

"llsydn"

The set operation is carried out on the master node, and its slave node copies the data through master-slave replication.
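The redirect in the session above happens because every key maps to one of the 16384 hash slots: Redis Cluster takes the CRC16 of the key modulo 16384, and if the key contains a non-empty `{...}` hash tag, only the tag is hashed. The Python sketch below reproduces that mapping offline. It assumes that `binascii.crc_hqx` with an initial value of 0 is the same CRC16 variant Redis uses, and the function name `key_slot` is illustrative. If that assumption holds, `key_slot("name1")` should agree with the slot 12933 reported in the redirect.

```python
import binascii

TOTAL_SLOTS = 16384


def key_slot(key: str) -> int:
    """Compute the cluster hash slot for a key, honoring {hash tags}."""
    data = key.encode("utf-8")
    start = data.find(b"{")
    if start != -1:
        end = data.find(b"}", start + 1)
        # Only a non-empty tag between the first "{" and the next "}" counts.
        if end != -1 and end != start + 1:
            data = data[start + 1:end]
    return binascii.crc_hqx(data, 0) % TOTAL_SLOTS


print(key_slot("name1"))
# Keys sharing a hash tag land in the same slot.
print(key_slot("{user1000}.following") == key_slot("{user1000}.followers"))
```

On a live cluster, the result can be cross-checked with the `CLUSTER KEYSLOT` command.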

1.3 Restarting a pod to verify the cluster (optional)

# Before restarting redis-1

10.168.235.239:6379> cluster nodes

4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 myself,master - 0 1670307319000 2 connected 5461-10922

bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379@16379 master - 0 1670307319575 1 connected 0-5460

70220b45e978d0cb3df19b07e55d883b49f4127d 10.168.235.238:6379@16379 slave 4367e4a45e557406a3112e7b79f82a44d4ce485e 0 1670307318000 2 connected

122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.225:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670307319781 1 connected

c2afcb9e83038a47d04bf328ead8033788548234 10.168.235.198:6379@16379 slave a2cec159bbe2efa11a8f60287b90927bcb214729 0 1670307319071 3 connected

a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379@16379 master - 0 1670307318000 3 connected 10923-16383

# Restart redis-1

kubectl delete pod redis-1 -n jxbp

pod "redis-1" deleted

# After redis-1 has restarted

10.168.235.239:6379> cluster nodes

4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 myself,master - 0 1670307349000 2 connected 5461-10922

bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 10.168.235.196:6379@16379 master - 0 1670307349988 1 connected 0-5460

70220b45e978d0cb3df19b07e55d883b49f4127d 10.168.235.238:6379@16379 slave 4367e4a45e557406a3112e7b79f82a44d4ce485e 0 1670307349000 2 connected

122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.232:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670307350089 1 connected

c2afcb9e83038a47d04bf328ead8033788548234 10.168.235.198:6379@16379 slave a2cec159bbe2efa11a8f60287b90927bcb214729 0 1670307350000 3 connected

a2cec159bbe2efa11a8f60287b90927bcb214729 10.168.235.222:6379@16379 master - 0 1670307348000 3 connected 10923-16383

As shown, although the IP of redis-1 changed after the restart, the Redis cluster still recognizes the node at its new IP and the cluster remains healthy.

10.168.235.225 ---> 10.168.235.232
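Spotting the changed address among six long lines is error-prone, so a comparison keyed by node ID can help. The Python sketch below diffs two `cluster nodes` snapshots and reports every node whose address changed. The function names are assumptions made for illustration, and the sample text contains only two of the six lines from each listing above to keep it short.

```python
BEFORE = """\
4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 myself,master - 0 1670307319000 2 connected 5461-10922
122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.225:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670307319781 1 connected
"""

AFTER = """\
4367e4a45e557406a3112e7b79f82a44d4ce485e 10.168.235.239:6379@16379 myself,master - 0 1670307349000 2 connected 5461-10922
122b89a51a9bf005e3d47b6d721c65621d2e9a75 10.168.235.232:6379@16379 slave bcae187137a9b30d7dab8fe0d8ed4a46c6e39638 0 1670307350089 1 connected
"""


def addresses_by_id(cluster_nodes_text):
    """Map node ID -> ip:port from `cluster nodes` output."""
    result = {}
    for line in cluster_nodes_text.splitlines():
        fields = line.split()
        if len(fields) >= 8:
            result[fields[0]] = fields[1].split("@")[0]
    return result


def changed_addresses(before, after):
    """Return {node ID: (old address, new address)} for nodes that moved."""
    old, new = addresses_by_id(before), addresses_by_id(after)
    return {
        node_id: (old[node_id], new[node_id])
        for node_id in old
        if node_id in new and old[node_id] != new[node_id]
    }


for node_id, (old_addr, new_addr) in changed_addresses(BEFORE, AFTER).items():
    print(f"{node_id}: {old_addr} ---> {new_addr}")
```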

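1.4 Creating the Service

Earlier we created the Headless Service that backs the StatefulSet, but that Service has no Cluster IP, so it cannot be used for access from outside. We therefore need one more Service dedicated to providing access to, and load balancing across, the Redis cluster.

Either ClusterIP or NodePort works here; I use NodePort.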

vi redis-ss.yaml

---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s.kuboard.cn/layer: db
    k8s.kuboard.cn/name: redis
  name: redis-ss
  namespace: jxbp
spec:
  ports:
    - name: imdgss
      port: 6379
      protocol: TCP
      targetPort: 6379
      nodePort: 6379
  selector:
    k8s.kuboard.cn/layer: db
    k8s.kuboard.cn/name: redis
  type: NodePort

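This creates a Service named redis-ss.

It exposes port 6379 and load-balances across the pods carrying the labels k8s.kuboard.cn/layer: db and k8s.kuboard.cn/name: redis. Note that 6379 lies outside the default Kubernetes NodePort range (30000-32767), so this manifest is only accepted if the API server's --service-node-port-range has been widened to include it.

Inside the K8S cluster, the Redis cluster can then be reached at redis-ss:6379.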

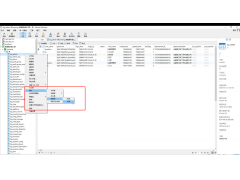

kubectl get service -n jxbp

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

redis-hs ClusterIP None <none> 6379/TCP 76m

redis-ss NodePort 10.96.54.201 <none> 6379:6379/TCP 2s

1.5 Spring Boot project configuration

spring.redis.cluster.nodes=redis-ss:6379

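1.6 Analysis of related questions

At this point you may wonder: why can the Redis pods still fail over correctly without a stable network identity? This comes down to Redis's own mechanism. Each node in a Redis cluster has its own NodeId (stored in the automatically generated nodes.conf), and that NodeId does not change when the IP changes, which effectively makes it a stable network identity as well. In other words, even if a Redis pod restarts, it loads the saved NodeId and keeps its identity. We can inspect the nodes.conf file of redis-0 on the NFS share: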

vi /opt/nfs/pv1/nodes.conf

f6d4993467a4ab1f3fa806f1122edd39f6466394 10.168.235.228:6379@16379 slave ebed24c8fca9ebc16ceaaee0c2bc2e3e09f7b2c0 0 1670316449064 2 connected

ebed24c8fca9ebc16ceaaee0c2bc2e3e09f7b2c0 10.168.235.240:6379@16379 myself,master - 0 1670316450000 2 connected 5461-10922

955e1236652c2fcb11f47c20a43149dcd1f1f92b 10.168.235.255:6379@16379 master - 0 1670316449565 1 connected 0-5460

574c40485bb8f6cfaf8618d482efb06f3e323f88 10.168.235.224:6379@16379 slave 955e1236652c2fcb11f47c20a43149dcd1f1f92b 0 1670316449000 1 connected

91bd3dc859ce51f1ed0e7cbd07b13786297bd05b 10.168.235.237:6379@16379 slave fe0b74c5e461aa22d4d782f891b78ddc4306eed4 0 1670316450672 3 connected

fe0b74c5e461aa22d4d782f891b78ddc4306eed4 10.168.235.253:6379@16379 master - 0 1670316450068 3 connected 10923-16383

vars currentEpoch 3 lastVoteEpoch 0

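As shown above, the first column is the NodeId, which stays fixed; the second column holds the IP and port, which may change.

Here are two scenarios in which the NodeId matters:

- When a slave pod reconnects after a disconnect and its IP has changed, the master sees that its NodeId is unchanged and treats it as the same slave as before.

- When a master pod goes offline, the cluster elects a new master from among its slaves. Once the old master comes back online, the cluster sees that its NodeId is unchanged and makes the old master a slave of the new master.

If you are interested, you can test both scenarios yourself; remember to watch the Redis logs.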
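The two scenarios can be pictured with a toy model in which everything is keyed by NodeId and the address is just an attribute that may change. The Python sketch below is exactly that: a simplified illustration with hypothetical node IDs and addresses, not Redis's real election or gossip logic (for instance, it promotes the first online slave immediately and has no voting).

```python
class ToyCluster:
    """Tracks nodes by NodeId; a toy model, not Redis's failover algorithm."""

    def __init__(self):
        self.nodes = {}  # node_id -> {"addr", "master_id", "online"}

    def add(self, node_id, addr, master_id=None):
        self.nodes[node_id] = {"addr": addr, "master_id": master_id, "online": True}

    def role(self, node_id):
        return "master" if self.nodes[node_id]["master_id"] is None else "slave"

    def go_offline(self, node_id):
        """Mark a node offline; if it was a master, promote one of its slaves."""
        self.nodes[node_id]["online"] = False
        if self.role(node_id) != "master":
            return None
        for other_id, other in self.nodes.items():
            if other["master_id"] == node_id and other["online"]:
                other["master_id"] = None  # promote this slave
                # The old master is recorded as a slave of the new master.
                self.nodes[node_id]["master_id"] = other_id
                return other_id
        return None

    def come_online(self, node_id, addr):
        """Same NodeId means same node, even if the address is new."""
        node = self.nodes[node_id]
        node["addr"] = addr
        node["online"] = True
        return self.role(node_id)


cluster = ToyCluster()
cluster.add("master-a", "10.0.0.1:6379")
cluster.add("slave-a", "10.0.0.2:6379", master_id="master-a")

# Scenario 1: the slave restarts with a new IP and is still the same slave.
cluster.go_offline("slave-a")
print(cluster.come_online("slave-a", "10.0.0.9:6379"))

# Scenario 2: the master goes down, the slave takes over, the old master
# returns under the same NodeId and becomes a slave of the new master.
print(cluster.go_offline("master-a"))
print(cluster.come_online("master-a", "10.0.0.7:6379"))
```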

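That leaves an open question: should a stateful application like Redis be deployed on k8s at all, or is it better to run the Redis cluster on external servers?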

