JVM memory usage out of control

I have a Tomcat webapp which does some pretty memory- and CPU-intensive tasks on behalf of clients. This is normal and is the desired functionality. However, when I run Tomcat, memory usage skyrockets over time to upwards of 4.0 GB, at which point I usually kill the process as it's messing with everything else running on my development machine:

I thought I had inadvertently introduced a memory leak with my code, but after checking into it with VisualVM, I'm seeing a different story:

VisualVM is showing the heap as taking up approximately 1 GB of RAM, which is what I set it to do with CATALINA_OPTS="-Xms256m -Xmx1024m".

Why is my system seeing this process as taking up a ton of memory when according to VisualVM, it's taking up hardly any at all?

After a bit of further sniffing around, I'm noticing that if multiple jobs are running simultaneously in the application, memory does not get freed. However, if I wait for each job to complete before submitting another to my BlockingQueue serviced by an ExecutorService, then memory is recycled effectively. How can I debug this? Why would garbage collection/memory reuse differ?

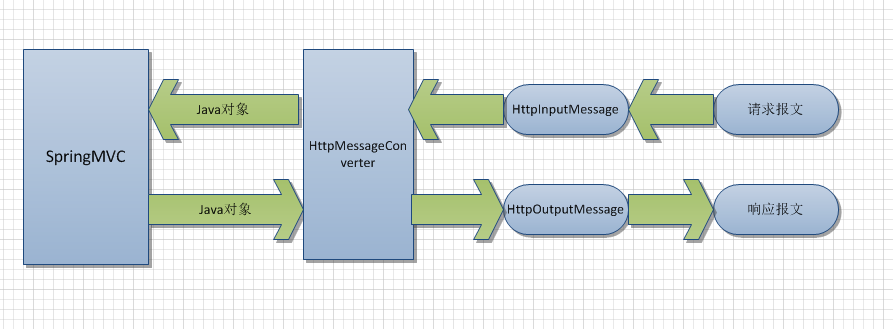

You can't control what you want to control. -Xmx only controls the Java heap; it doesn't control the JVM's consumption of native memory, which varies considerably by implementation. VisualVM is only showing you what the heap is consuming; it doesn't show what the entire JVM consumes in native memory as an OS process. You will have to use OS-level tools to see that, and they will report radically different numbers, usually much larger than anything VisualVM reports, because the JVM uses native memory for far more than the heap.
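
To see the split from inside the process, here is a minimal sketch that uses the standard `java.lang.management` API to print heap and non-heap usage side by side. The class name `HeapVsNonHeap` and its helper methods are made up for illustration. Note that even the "non-heap" figure only covers the memory pools the JVM chooses to report, so the total will typically still be lower than what OS-level tools show for the process.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryMXBean;
import java.lang.management.MemoryUsage;

// Hypothetical helper class, not part of Tomcat or the JDK.
public class HeapVsNonHeap {

    /** Formats a byte count as whole mebibytes; negative means "no limit defined". */
    static String mib(long bytes) {
        return bytes < 0 ? "undefined" : (bytes / (1024 * 1024)) + " MiB";
    }

    /** Builds a two-line report of heap and non-heap usage as the JVM sees it. */
    static String report() {
        MemoryMXBean memory = ManagementFactory.getMemoryMXBean();
        MemoryUsage heap = memory.getHeapMemoryUsage();       // bounded by -Xmx
        MemoryUsage nonHeap = memory.getNonHeapMemoryUsage(); // not bounded by -Xmx
        return "heap:     used=" + mib(heap.getUsed())
                + " committed=" + mib(heap.getCommitted())
                + " max=" + mib(heap.getMax()) + System.lineSeparator()
                + "non-heap: used=" + mib(nonHeap.getUsed())
                + " committed=" + mib(nonHeap.getCommitted())
                + " max=" + mib(nonHeap.getMax());
    }

    public static void main(String[] args) {
        System.out.println(report());
    }
}
```

Comparing the sum of the two "committed" values with the resident size the operating system reports for the same process gives a rough idea of how much memory sits outside anything this API accounts for.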

From the article "Thanks for the Memory: Understanding How the JVM Uses Native Memory on Windows and Linux":

Maintaining the heap and the garbage collector uses native memory that you can't control.

> More native memory is required to maintain the state of the memory-management system maintaining the Java heap. Data structures must be allocated to track free storage and record progress when collecting garbage. The exact size and nature of these data structures varies with implementation, but many are proportional to the size of the heap.

The JIT compiler also uses native memory, just as javac would:

> Bytecode compilation uses native memory (in the same way that a static compiler such as gcc requires memory to run), but both the input (the bytecode) and the output (the executable code) from the JIT must also be stored in native memory. Java applications that contain many JIT-compiled methods use more native memory than smaller applications.
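
As a rough illustration, the sketch below lists the non-heap memory pools the running JVM exposes. On HotSpot these usually include the pools holding JIT-compiled code and class metadata, but the pool names differ between JVM vendors and versions, so treat whatever it prints as implementation-specific. `NonHeapPools` is a hypothetical class name.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.MemoryPoolMXBean;
import java.lang.management.MemoryType;
import java.lang.management.MemoryUsage;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper class for illustration only.
public class NonHeapPools {

    /** Returns one line per non-heap memory pool, e.g. the pool that stores JIT output. */
    static List<String> nonHeapPoolLines() {
        List<String> lines = new ArrayList<>();
        for (MemoryPoolMXBean pool : ManagementFactory.getMemoryPoolMXBeans()) {
            if (pool.getType() != MemoryType.NON_HEAP) {
                continue; // heap pools are the ones -Xmx already limits
            }
            MemoryUsage usage = pool.getUsage();
            if (usage == null) {
                continue; // getUsage() returns null when the pool is no longer valid
            }
            lines.add(pool.getName() + ": " + (usage.getUsed() / 1024) + " KiB used");
        }
        return lines;
    }

    public static void main(String[] args) {
        nonHeapPoolLines().forEach(System.out::println);
    }
}
```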

Then you have the classloader(s), which also use native memory:

> Java applications are composed of classes that define object structure and method logic. They also use classes from the Java runtime class libraries (such as java.lang.String) and may use third-party libraries. These classes need to be stored in memory for as long as they are being used. How classes are stored varies by implementation.
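
To get a feel for how many classes are involved, the standard `ClassLoadingMXBean` reports class counts for the running JVM. This is only a sketch (`LoadedClasses` is a made-up name), and it reports counts, not the memory those classes occupy:

```java
import java.lang.management.ClassLoadingMXBean;
import java.lang.management.ManagementFactory;

// Hypothetical helper class for illustration only.
public class LoadedClasses {

    /** Summarizes how many classes are loaded now and over the JVM's lifetime. */
    static String summary() {
        ClassLoadingMXBean classes = ManagementFactory.getClassLoadingMXBean();
        return "currently loaded=" + classes.getLoadedClassCount()
                + ", total loaded=" + classes.getTotalLoadedClassCount()
                + ", unloaded=" + classes.getUnloadedClassCount();
    }

    public static void main(String[] args) {
        System.out.println(summary());
    }
}
```

Run inside a webapp, a "currently loaded" count that keeps growing across redeploys would be one hint that class metadata is accumulating.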

I won't even start quoting the section on threads. I think you get the idea: -Xmx doesn't control what you think it controls. It controls the JVM heap, not everything goes in the JVM heap, and the heap takes up way more native memory than what you specify, for management and bookkeeping.

Plain and simple, the JVM uses more memory than what is supplied in -Xms, -Xmx and the other command-line parameters.

Here is a very detailed article on how the JVM allocates and manages memory. It isn't as simple as the assumptions in your question suggest, and it is well worth a thorough read.

Thread stack size in many implementations has minimum limits that vary by operating system and sometimes by JVM version; the thread stack setting is ignored if you set it below the minimum that the JVM or the OS allows (on *nix, ulimit sometimes has to be set instead). Other command-line options work the same way, silently defaulting to higher values when the supplied values are too small. Don't assume that every value you pass in is the value actually used.
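
The same "it's only a request" behaviour is visible from Java code. The `Thread` constructor that takes a `stackSize` argument documents that value as a suggestion the JVM may round up or ignore. The sketch below (the class `StackProbe` and its methods are hypothetical) asks for two different stack sizes and counts how deep a trivial recursion gets before a `StackOverflowError`. The depths are platform-dependent and also move around with JIT compilation, so read them as a rough signal rather than a measurement.

```java
// Hypothetical probe class for illustration only.
public class StackProbe {

    /** Recurses until the stack overflows, counting frames in depth[0]. */
    private static void descend(int[] depth) {
        depth[0]++;
        descend(depth);
    }

    /** Runs the recursion on a thread created with the requested stack size (a hint only). */
    static int maxDepth(long requestedStackBytes) throws InterruptedException {
        int[] depth = {0};
        Runnable task = () -> {
            try {
                descend(depth);
            } catch (StackOverflowError expected) {
                // depth[0] now holds the number of frames that fit on this thread's stack
            }
        };
        Thread probe = new Thread(null, task, "stack-probe", requestedStackBytes);
        probe.start();
        probe.join();
        return depth[0];
    }

    public static void main(String[] args) throws InterruptedException {
        // A request far below typical platform minimums, then a more ordinary one.
        System.out.println("requested 16 KiB -> depth " + maxDepth(16L * 1024));
        System.out.println("requested 2 MiB  -> depth " + maxDepth(2L * 1024 * 1024));
    }
}
```

If the tiny request still reaches a substantial depth, the JVM or the OS has quietly substituted a larger stack than the one asked for.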

The classloaders (and Tomcat has more than one) eat up lots of memory in ways that aren't well documented. The JIT eats up a lot of memory too, trading space for time, which is a good trade-off most of the time.
