Do current x86 architectures support non-temporal loads (from quot;normalquot; memory)?(当前的 x86 架构是否支持非临时加载(来自“正常内存)?)

问题描述

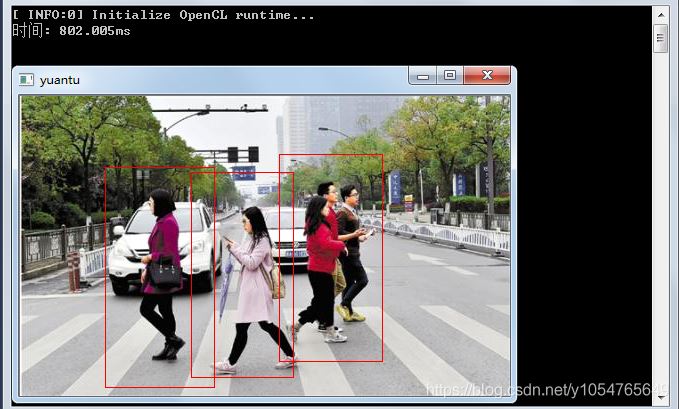

我知道关于这个主题的多个问题,但是,我没有看到任何明确的答案,也没有任何基准测量.因此,我创建了一个处理两个整数数组的简单程序.第一个数组a 非常大(64 MB),第二个数组b 很小,无法放入L1 缓存.程序对a进行迭代,并以模块化的方式将其元素添加到b的对应元素中(当到达b的末尾时,程序重新从头开始).b 不同大小的 L1 缓存未命中数测量如下:

测量是在具有 32 kiB L1 数据缓存的 Xeon E5 2680v3 Haswell 型 CPU 上进行的.因此,在所有情况下,b 都适合 L1 缓存.然而,未命中数显着增加了大约 16 kiB b 内存占用.这可能是意料之中的,因为 a 和 b 的加载会导致从 b 开始的缓存行在这一点上失效.

绝对没有理由将 a 的元素保存在缓存中,它们只会被使用一次.因此,我运行了一个带有 a 数据的非时间加载的程序变体,但未命中的数量没有改变.我还运行了一个对 a 数据进行非临时预取的变体,但结果仍然非常相同.

我的基准代码如下(显示无非时间预取的变体):

int main(int argc, char* argv[]){uint64_t* 一个;const uint64_t a_bytes = 64 * 1024 * 1024;const uint64_t a_count = a_bytes/sizeof(uint64_t);posix_memalign((void**)(&a), 64, a_bytes);uint64_t* b;const uint64_t b_bytes = atol(argv[1]) * 1024;const uint64_t b_count = b_bytes/sizeof(uint64_t);posix_memalign((void**)(&b), 64, b_bytes);__m256i 个 = _mm256_set1_epi64x(1UL);for (long i = 0; i 橙色曲线代表普通负载,它具有预期的形状.蓝色曲线代表在指令前缀中设置了所谓的驱逐提示(EH)的负载,灰色曲线代表了a的每个缓存行被手动驱逐的情况;KNC 启用的这两种技巧显然都像我们想要的那样对超过 16 kiB 的 b 起作用.被测循环代码如下:

while (a_ptr < a_ptr_end) {#ifdef NTLOAD__m512i aa = _mm512_extload_epi64((__m512i*)a_ptr,_MM_UPCONV_EPI64_NONE, _MM_BROADCAST64_NONE, _MM_HINT_NT);#别的__m512i aa = _mm512_load_epi64((__m512i*)a_ptr);#万一__m512i bb = _mm512_load_epi64((__m512i*)b_ptr);bb = _mm512_or_epi64(aa, bb);_mm512_store_epi64((__m512i*)b_ptr, bb);#ifdef 驱逐出境_mm_clevict(a_ptr, _MM_HINT_T0);#万一a_ptr += 8;b_ptr += 8;如果 (b_ptr >= b_ptr_end)b_ptr = b;}

更新 2

在至强融核上,为 a_ptr 的正常负载变体(橙色曲线)预取生成icpc:

400e93: 62 d1 78 08 18 4c 24 vprefetch0 [r12+0x80]

当我手动(通过十六进制编辑可执行文件)将其修改为:

400e93: 62 d1 78 08 18 44 24 vprefetchnta [r12+0x80]

我得到了想要的结果,甚至比蓝/灰曲线还要好.但是,即使在循环之前使用 #pragma prefetch a_ptr:_MM_HINT_NTA ,我也无法强制编译器为我生成非临时预取:(

解决方案 具体回答标题问题:

是,最近1主流英特尔 CPU 支持在普通 2 内存上的非临时加载 - 但仅通过非临时预取指令间接",而不是直接使用像 movntdqa 这样的非临时加载指令.这与非临时存储形成对比,在非临时存储中您可以直接使用相应的非临时存储指令3.

基本思想是在任何正常加载之前向缓存行发出prefetchnta,然后正常发出加载.如果该行尚未在缓存中,它将以非临时方式加载.非时间方式的确切含义取决于体系结构,但一般模式是该行至少加载到 L1 和一些更高的缓存级别.实际上,要使预取有任何用途,它需要使该行至少加载到某个 缓存级别以供以后加载使用.该行也可以在缓存中进行特殊处理,例如将其标记为高优先级以进行驱逐或限制其放置方式.

所有这一切的结果是,虽然在某种意义上支持非时间加载,但它们实际上只是部分非时间性的,不像商店,你真的没有在任何地方留下任何痕迹缓存级别.非临时加载会导致一些缓存污染,但通常少于常规加载.确切的细节是特定于架构的,我在下面包含了现代英特尔的一些细节(你可以在这个答案中找到一个稍长的文章/a>).

Skylake 客户端

根据测试在这个答案中,prefetchnta Skylake 的行为似乎是正常取入 L1 缓存,完全跳过 L2,并以有限的方式取入 L3 缓存(可能只有 1 或 2 路,以便 nta 预取可用的 L3 总量数量有限).

这是在 Skylake 客户端上测试过的,但我相信这一点基本行为可能向后扩展到 Sandy Bridge 和更早版本(基于 Intel 优化指南中的措辞),并且还转发到 Kaby Lake 和基于 Skylake 客户端的更高版本架构.因此,除非您使用的是 Skylake-SP 或 Skylake-X 部件,或者非常旧的 CPU,否则这可能是您可以从 prefetchnta 中获得的行为.

天湖服务器

已知唯一具有不同行为的最新英特尔芯片是 Skylake 服务器(用于 Skylake-X、Skylake-SP 和其他几行).这对 L2 和 L3 架构进行了相当大的更改,并且 L3 不再包含更大的 L2.对于这个芯片,似乎prefetchnta都跳过了L2和L3缓存,所以在这个架构上缓存污染仅限于L1.

此行为是用户 Mysticial 在评论.不利的一面,正如这些评论中所指出的那样,这会使 prefetchnta 变得更加脆弱:如果您获得了预取距离或时间错误(当涉及超线程并且兄弟核心处于活动状态时尤其容易),并且数据在您使用之前从 L1 中被逐出,您将一直返回到主内存而不是早期架构中的 L3.

<小时>1 Recent 这里可能意味着过去十年左右的任何事情,但我并不是暗示早期的硬件不支持非临时预取:支持可能可以追溯到 prefetchnta 的引入,但我没有硬件来检查它,也找不到现有的可靠信息来源.

2 Normal这里只是指WB(writeback)内存,也就是绝大多数时间在应用层处理的内存.

3 具体来说,NT 存储指令是用于通用寄存器的 movnti 和 movntd* 和 movntp*> SIMD 寄存器系列.

I am aware of multiple questions on this topic, however, I haven't seen any clear answers nor any benchmark measurements. I thus created a simple program that works with two arrays of integers. The first array a is very large (64 MB) and the second array b is small to fit into L1 cache. The program iterates over a and adds its elements to corresponding elements of b in a modular sense (when the end of b is reached, the program starts from its beginning again). The measured numbers of L1 cache misses for different sizes of b is as follows:

The measurements were made on a Xeon E5 2680v3 Haswell type CPU with 32 kiB L1 data cache. Therefore, in all the cases, b fitted into L1 cache. However, the number of misses grew considerably by around 16 kiB of b memory footprint. This might be expected since the loads of both a and b causes invalidation of cache lines from the beginning of b at this point.

There is absolutely no reason to keep elements of a in cache, they are used only once. I therefore run a program variant with non-temporal loads of a data, but the number of misses did not change. I also run a variant with non-temporal prefetching of a data, but still with the very same results.

My benchmark code is as follows (variant w/o non-temporal prefetching shown):

int main(int argc, char* argv[])

{

uint64_t* a;

const uint64_t a_bytes = 64 * 1024 * 1024;

const uint64_t a_count = a_bytes / sizeof(uint64_t);

posix_memalign((void**)(&a), 64, a_bytes);

uint64_t* b;

const uint64_t b_bytes = atol(argv[1]) * 1024;

const uint64_t b_count = b_bytes / sizeof(uint64_t);

posix_memalign((void**)(&b), 64, b_bytes);

__m256i ones = _mm256_set1_epi64x(1UL);

for (long i = 0; i < a_count; i += 4)

_mm256_stream_si256((__m256i*)(a + i), ones);

// load b into L1 cache

for (long i = 0; i < b_count; i++)

b[i] = 0;

int papi_events[1] = { PAPI_L1_DCM };

long long papi_values[1];

PAPI_start_counters(papi_events, 1);

uint64_t* a_ptr = a;

const uint64_t* a_ptr_end = a + a_count;

uint64_t* b_ptr = b;

const uint64_t* b_ptr_end = b + b_count;

while (a_ptr < a_ptr_end) {

#ifndef NTLOAD

__m256i aa = _mm256_load_si256((__m256i*)a_ptr);

#else

__m256i aa = _mm256_stream_load_si256((__m256i*)a_ptr);

#endif

__m256i bb = _mm256_load_si256((__m256i*)b_ptr);

bb = _mm256_add_epi64(aa, bb);

_mm256_store_si256((__m256i*)b_ptr, bb);

a_ptr += 4;

b_ptr += 4;

if (b_ptr >= b_ptr_end)

b_ptr = b;

}

PAPI_stop_counters(papi_values, 1);

std::cout << "L1 cache misses: " << papi_values[0] << std::endl;

free(a);

free(b);

}

What I wonder is whether CPU vendors support or are going to support non-temporal loads / prefetching or any other way how to label some data as not-being-hold in cache (e.g., to tag them as LRU). There are situations, e.g., in HPC, where similar scenarios are common in practice. For example, in sparse iterative linear solvers / eigensolvers, matrix data are usually very large (larger than cache capacities), but vectors are sometimes small enough to fit into L3 or even L2 cache. Then, we would like to keep them there at all costs. Unfortunately, loading of matrix data can cause invalidation of especially x-vector cache lines, even though in each solver iteration, matrix elements are used only once and there is no reason to keep them in cache after they have been processed.

UPDATE

I just did a similar experiment on an Intel Xeon Phi KNC, while measuring runtime instead of L1 misses (I haven't find a way how to measure them reliably; PAPI and VTune gave weird metrics.) The results are here:

The orange curve represents ordinary loads and it has the expected shape. The blue curve represents loads with so-call eviction hint (EH) set in the instruction prefix and the gray curve represents a case where each cache line of a was manually evicted; both these tricks enabled by KNC obviously worked as we wanted to for b over 16 kiB. The code of the measured loop is as follows:

while (a_ptr < a_ptr_end) {

#ifdef NTLOAD

__m512i aa = _mm512_extload_epi64((__m512i*)a_ptr,

_MM_UPCONV_EPI64_NONE, _MM_BROADCAST64_NONE, _MM_HINT_NT);

#else

__m512i aa = _mm512_load_epi64((__m512i*)a_ptr);

#endif

__m512i bb = _mm512_load_epi64((__m512i*)b_ptr);

bb = _mm512_or_epi64(aa, bb);

_mm512_store_epi64((__m512i*)b_ptr, bb);

#ifdef EVICT

_mm_clevict(a_ptr, _MM_HINT_T0);

#endif

a_ptr += 8;

b_ptr += 8;

if (b_ptr >= b_ptr_end)

b_ptr = b;

}

UPDATE 2

On Xeon Phi, icpc generated for normal-load variant (orange curve) prefetching for a_ptr:

400e93: 62 d1 78 08 18 4c 24 vprefetch0 [r12+0x80]

When I manually (by hex-editing the executable) modified this to:

400e93: 62 d1 78 08 18 44 24 vprefetchnta [r12+0x80]

I got the desired resutls, even better than the blue/gray curves. However, I was not able to force the compiler to generate non-temporal prefetchnig for me, even by using #pragma prefetch a_ptr:_MM_HINT_NTA before the loop :(

解决方案 To answer specifically the headline question:

Yes, recent1 mainstream Intel CPUs support non-temporal loads on normal 2 memory - but only "indirectly" via non-temporal prefetch instructions, rather than directly using non-temporal load instructions like movntdqa. This is in contrast to non-temporal stores where you can just use the corresponding non-temporal store instructions3 directly.

The basic idea is that you issue a prefetchnta to the cache line before any normal loads, and then issue loads as normal. If the line wasn't already in the cache, it will be loaded in a non-temporal fashion. The exact meaning of non-temporal fashion depends on the architecture but the general pattern is that the line is loaded into at least the L1 and perhaps some higher cache levels. Indeed for a prefetch to be of any use it needs to cause the line to loaded at least into some cache level for consumption by a later load. The line may also be treated specially in the cache, for example by flagging it as high priority for eviction or restricting the ways in which it can be placed.

The upshot of all this is that while non-temporal loads are supported in a sense, they are really only partly non-temporal unlike stores where you really leave no trace of the line in any of the cache levels. Non-temporal loads will cause some cache pollution, but generally less than regular loads. The exact details are architecture specific, and I've included some details below for modern Intel (you can find a slightly longer writeup in this answer).

Skylake Client

Based on the tests in this answer it seems that the behavior for prefetchnta Skylake is to fetch normally into the L1 cache, to skip the L2 entirely, and fetches in a limited way into the L3 cache (probably into 1 or 2 ways only so the total amount of the L3 available to nta prefetches is limited).

This was tested on Skylake client, but I believe this basic behavior probably extends backwards probably to Sandy Bridge and earlier (based on wording in the Intel optimization guide), and also forwards to Kaby Lake and later architectures based on Skylake client. So unless you are using a Skylake-SP or Skylake-X part, or an extremely old CPU, this is probably the behavior you can expect from prefetchnta.

Skylake Server

The only recent Intel chip known to have different behavior is Skylake server (used in Skylake-X, Skylake-SP and a few other lines). This has a considerably changed L2 and L3 architecture, and the L3 is no longer inclusive of the much larger L2. For this chip, it seems that prefetchnta skips both the L2 and L3 caches, so on this architecture cache pollution is limited to the L1.

This behavior was reported by user Mysticial in a comment. The downside, as pointed out in those comments is that this makes prefetchnta much more brittle: if you get the prefetch distance or timing wrong (especially easy when hyperthreading is involved and the sibling core is active), and the data gets evicted from L1 before you use, you are going all the way back to main memory rather than the L3 on earlier architectures.

1 Recent here probably means anything in the last decade or so, but I don't mean to imply that earlier hardware didn't support non-temporal prefetch: it's possible that support goes right back to the introduction of prefetchnta but I don't have the hardware to check that and can't find an existing reliable source of information on it.

2 Normal here just means WB (writeback) memory, which is the memory dealing with at the application level the overwhelming majority of the time.

3 Specifically, the NT store instructions are movnti for general purpose registers and the movntd* and movntp* families for SIMD registers.

这篇关于当前的 x86 架构是否支持非临时加载(来自“正常"内存)?的文章就介绍到这了,希望我们推荐的答案对大家有所帮助,也希望大家多多支持编程学习网!

本文标题为:当前的 x86 架构是否支持非临时加载(来自“正常"内存)?

- 从python回调到c++的选项 2022-11-16

- 与 int by int 相比,为什么执行 float by float 矩阵乘法更快? 2021-01-01

- STL 中有 dereference_iterator 吗? 2022-01-01

- Stroustrup 的 Simple_window.h 2022-01-01

- 一起使用 MPI 和 OpenCV 时出现分段错误 2022-01-01

- 如何对自定义类的向量使用std::find()? 2022-11-07

- 近似搜索的工作原理 2021-01-01

- C++ 协变模板 2021-01-01

- 静态初始化顺序失败 2022-01-01

- 使用/clr 时出现 LNK2022 错误 2022-01-01