Atomic double floating point or SSE/AVX vector load/store on x86_64(x86_64 上的原子双浮点或 SSE/AVX 矢量加载/存储)

问题描述

这里(以及一些 SO 问题)我看到 C++ 没有不支持无锁 std::atomic

Here (and in a few SO questions) I see that C++ doesn't support something like lock-free std::atomic<double> and can't yet support something like atomic AVX/SSE vector because it's CPU-dependent (though nowadays of CPUs I know, ARM, AArch64 and x86_64 have vectors).

但是在 x86_64 中是否有对 double 或向量的原子操作的汇编级支持?如果是,支持哪些操作(例如加载、存储、加、减、乘)?MSVC++2017在atomic中实现了哪些操作无锁?

But is there assembly-level support for atomic operations on doubles or vectors in x86_64? If so, which operations are supported (like load, store, add, subtract, multiply maybe)? Which operations does MSVC++2017 implement lock-free in atomic<double>?

推荐答案

C++ 不支持类似无锁的

std::atomic

实际上,C++11 std::atomic<double> 在典型的 C++ 实现上是无锁的,并且确实公开了您在 asm 中可以使用 float/double 在 x86 上(例如加载、存储和 CAS 足以实现任何东西:为什么没有完全实现原子双精度).不过,当前的编译器并不总是能有效地编译 atomic.

Actually, C++11 std::atomic<double> is lock-free on typical C++ implementations, and does expose nearly everything you can do in asm for lock-free programming with float/double on x86 (e.g. load, store, and CAS are enough to implement anything: Why isn't atomic double fully implemented). Current compilers don't always compile atomic<double> efficiently, though.

C++11 std::atomic 没有用于 英特尔的事务内存扩展 (TSX) 的 API)(用于 FP 或整数).TSX 可能会改变游戏规则,尤其是对于 FP/SIMD,因为它将消除 xmm 和整数寄存器之间的所有反弹数据开销.如果事务没有中止,无论您对双倍或向量加载/存储所做的任何事情都会自动发生.

C++11 std::atomic doesn't have an API for Intel's transactional-memory extensions (TSX) (for FP or integer). TSX could be a game-changer especially for FP / SIMD, since it would remove all overhead of bouncing data between xmm and integer registers. If the transaction doesn't abort, whatever you just did with double or vector loads/stores happens atomically.

一些非 x86 硬件支持 float/double 和 C++ 的原子添加 p0020 是向 C++ 的 fetch_add 和 operator+=/-= 模板特化添加的提议code>std::atomic

Some non-x86 hardware supports atomic add for float/double, and C++ p0020 is a proposal to add fetch_add and operator+= / -= template specializations to C++'s std::atomic<float> / <double>.

具有 LL/SC 原子而不是 x86 样式内存的硬件-目标指令,例如ARM和大多数其他RISC CPU,可以在没有CAS的情况下对double和float进行原子RMW操作,但您仍然必须从FP获取数据到整数寄存器,因为 LL/SC 通常只适用于整数寄存器,比如 x86 的 cmpxchg.但是,如果硬件仲裁 LL/SC 对以避免/减少活锁,那么在非常高的竞争情况下,它会比使用 CAS 循环更有效.如果您设计的算法很少发生争用,那么 fetch_add 的 LL/add/SC 重试循环与加载 + 添加 + LL/SC CAS 重试循环之间可能只有很小的代码大小差异.

Hardware with LL/SC atomics instead of x86-style memory-destination instruction, such as ARM and most other RISC CPUs, can do atomic RMW operations on double and float without a CAS, but you still have to get the data from FP to integer registers because LL/SC is usually only available for integer regs, like x86's cmpxchg. However, if the hardware arbitrates LL/SC pairs to avoid/reduce livelock, it would be significantly more efficient than with a CAS loop in very-high-contention situations. If you've designed your algorithms so contention is rare, there's maybe only a small code-size difference between an LL/add/SC retry-loop for fetch_add vs. a load + add + LL/SC CAS retry loop.

x86 自然对齐的加载和存储是原子的最多 8 个字节,甚至 x87 或 SSE.(例如 movsd xmm0, [some_variable] 是原子的,即使在 32 位模式下也是如此).实际上,gcc 使用 x87 fild/fistp 或 SSE 8B 加载/存储来实现 std::atomic 加载和存储在 32-位代码.

x86 natually-aligned loads and stores are atomic up to 8 bytes, even x87 or SSE. (For example movsd xmm0, [some_variable] is atomic, even in 32-bit mode). In fact, gcc uses x87 fild/fistp or SSE 8B loads/stores to implement std::atomic<int64_t> load and store in 32-bit code.

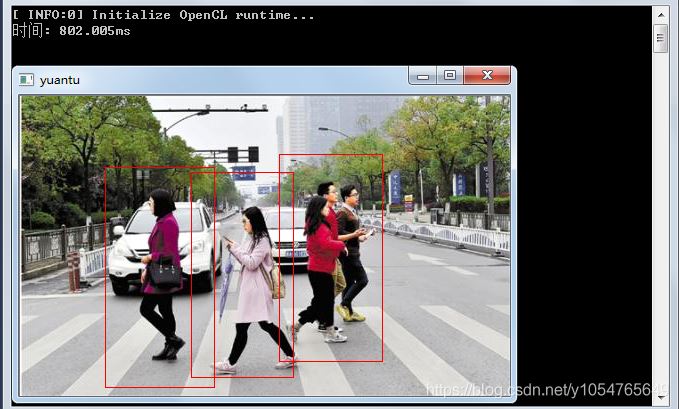

具有讽刺意味的是,编译器(gcc7.1、clang4.0、ICC17、MSVC CL19)在 64 位代码(或 SSE2 可用的 32 位)中做得很糟糕,并且通过整数寄存器来反弹数据,而不仅仅是执行movsd 直接从 xmm regs (在 Godbolt 上查看):

Ironically, compilers (gcc7.1, clang4.0, ICC17, MSVC CL19) do a bad job in 64-bit code (or 32-bit with SSE2 available), and bounce data through integer registers instead of just doing movsd loads/stores directly to/from xmm regs (see it on Godbolt):

#include <atomic>

std::atomic<double> ad;

void store(double x){

ad.store(x, std::memory_order_release);

}

// gcc7.1 -O3 -mtune=intel:

// movq rax, xmm0 # ALU xmm->integer

// mov QWORD PTR ad[rip], rax

// ret

double load(){

return ad.load(std::memory_order_acquire);

}

// mov rax, QWORD PTR ad[rip]

// movq xmm0, rax

// ret

如果没有 -mtune=intel,gcc 喜欢为 integer->xmm 存储/重新加载.参见 https://gcc.gnu.org/bugzilla/show_bug.cgi?id=80820 和我报告的相关错误.即使对于 -mtune=generic,这也是一个糟糕的选择.AMD 对整数和向量寄存器之间的 movq 具有高延迟,但对于存储/重新加载也具有高延迟.使用默认的 -mtune=generic,load() 编译为:

Without -mtune=intel, gcc likes to store/reload for integer->xmm. See https://gcc.gnu.org/bugzilla/show_bug.cgi?id=80820 and related bugs I reported. This is a poor choice even for -mtune=generic. AMD has high latency for movq between integer and vector regs, but it also has high latency for a store/reload. With the default -mtune=generic, load() compiles to:

// mov rax, QWORD PTR ad[rip]

// mov QWORD PTR [rsp-8], rax # store/reload integer->xmm

// movsd xmm0, QWORD PTR [rsp-8]

// ret

在 xmm 和整数寄存器之间移动数据让我们进入下一个主题:

Moving data between xmm and integer register brings us to the next topic:

原子读-修改-写(如fetch_add)是另一回事:有对整数的直接支持,例如lock xadd [mem]、eax(参见对于'int num',num++可以是原子的吗?a> 了解更多详情).对于其他情况,例如 atomic 或 atomic,x86 上的唯一选项是带有 cmpxchg 的重试循环(或多伦多证券交易所).

Atomic read-modify-write (like fetch_add) is another story: there is direct support for integers with stuff like lock xadd [mem], eax (see Can num++ be atomic for 'int num'? for more details). For other things, like atomic<struct> or atomic<double>, the only option on x86 is a retry loop with cmpxchg (or TSX).

原子比较和交换 (CAS) 可用作锁- 任何原子 RMW 操作的免费构建块,最高可达硬件支持的最大 CAS 宽度.在 x86-64 上,使用 cmpxchg16b 是 16 个字节(在某些第一代 AMD K8 上不可用,因此对于 gcc,您必须使用 -mcx16 或 -march=whatever 以启用它).

Atomic compare-and-swap (CAS) is usable as a lock-free building-block for any atomic RMW operation, up to the max hardware-supported CAS width. On x86-64, that's 16 bytes with cmpxchg16b (not available on some first-gen AMD K8, so for gcc you have to use -mcx16 or -march=whatever to enable it).

gcc 为 exchange() 提供了最好的 asm:

gcc makes the best asm possible for exchange():

double exchange(double x) {

return ad.exchange(x); // seq_cst

}

movq rax, xmm0

xchg rax, QWORD PTR ad[rip]

movq xmm0, rax

ret

// in 32-bit code, compiles to a cmpxchg8b retry loop

void atomic_add1() {

// ad += 1.0; // not supported

// ad.fetch_or(-0.0); // not supported

// have to implement the CAS loop ourselves:

double desired, expected = ad.load(std::memory_order_relaxed);

do {

desired = expected + 1.0;

} while( !ad.compare_exchange_weak(expected, desired) ); // seq_cst

}

mov rax, QWORD PTR ad[rip]

movsd xmm1, QWORD PTR .LC0[rip]

mov QWORD PTR [rsp-8], rax # useless store

movq xmm0, rax

mov rax, QWORD PTR [rsp-8] # and reload

.L8:

addsd xmm0, xmm1

movq rdx, xmm0

lock cmpxchg QWORD PTR ad[rip], rdx

je .L5

mov QWORD PTR [rsp-8], rax

movsd xmm0, QWORD PTR [rsp-8]

jmp .L8

.L5:

ret

compare_exchange 总是按位比较,因此您无需担心负零 (-0.0) 比较等于 +0.0 的事实 在 IEEE 语义中,或者 NaN 是无序的.但是,如果您尝试检查 desired == expected 并跳过 CAS 操作,这可能是一个问题.对于足够新的编译器,memcmp(&expected, &desired, sizeof(double)) == 0 可能是在 C++ 中表达 FP 值按位比较的好方法.只要确保避免误报;漏报只会导致不需要的 CAS.

compare_exchange always does a bitwise comparison, so you don't need to worry about the fact that negative zero (-0.0) compares equal to +0.0 in IEEE semantics, or that NaN is unordered. This could be an issue if you try to check that desired == expected and skip the CAS operation, though. For new enough compilers, memcmp(&expected, &desired, sizeof(double)) == 0 might be a good way to express a bitwise comparison of FP values in C++. Just make sure you avoid false positives; false negatives will just lead to an unneeded CAS.

硬件仲裁lock or [mem], 1 绝对比在 lock cmpxchg 重试循环上旋转多个线程要好.每次内核访问缓存行但失败时,它的 cmpxchg 与整数内存目标操作相比浪费了吞吐量,而整数内存目标操作一旦获得缓存行就总是成功.

Hardware-arbitrated lock or [mem], 1 is definitely better than having multiple threads spinning on lock cmpxchg retry loops. Every time a core gets access to the cache line but fails its cmpxchg is wasted throughput compared to integer memory-destination operations that always succeed once they get their hands on a cache line.

IEEE 浮点数的一些特殊情况可以通过整数运算来实现.例如atomic 的绝对值可以通过 lock 和 [mem], rax 来完成(其中 RAX 具有除符号位设置之外的所有位).或者通过将 1 或运算到符号位来强制浮点/双精度为负.或者用 XOR 切换它的符号.您甚至可以使用 lock add [mem], 1 原子地将其幅度增加 1 ulp.(但前提是你可以确定它不是无穷大开始...... nextafter() 是一个有趣的函数,这要归功于 IEEE754 非常酷的带有偏置指数的设计,这使得从尾数到指数的进位真正起作用.)

Some special cases for IEEE floats can be implemented with integer operations. e.g. absolute value of an atomic<double> could be done with lock and [mem], rax (where RAX has all bits except the sign bit set). Or force a float / double to be negative by ORing a 1 into the sign bit. Or toggle its sign with XOR. You could even atomically increase its magnitude by 1 ulp with lock add [mem], 1. (But only if you can be sure it wasn't infinity to start with... nextafter() is an interesting function, thanks to the very cool design of IEEE754 with biased exponents that makes carry from mantissa into exponent actually work.)

可能无法在 C++ 中表达这一点,让编译器在使用 IEEE FP 的目标上为您完成.因此,如果您需要它,您可能必须自己对 atomic<uint64_t> 或其他东西进行类型双关,并检查 FP 字节序是否与整数字节序匹配,等等(或者只是这样做仅适用于 x86.大多数其他目标都有 LL/SC 而不是内存目标锁定操作.)

There's probably no way to express this in C++ that will let compilers do it for you on targets that use IEEE FP. So if you want it, you might have to do it yourself with type-punning to atomic<uint64_t> or something, and check that FP endianness matches integer endianness, etc. etc. (Or just do it only for x86. Most other targets have LL/SC instead of memory-destination locked operations anyway.)

尚不能支持诸如原子 AVX/SSE 向量之类的东西,因为它依赖于 CPU

正确.无法通过缓存一致性系统检测 128b 或 256b 存储或加载何时是原子的.(https://gcc.gnu.org/bugzilla/show_bug.cgi?id=70490).当通过窄协议在缓存之间传输缓存行时,即使是在 L1D 和执行单元之间进行原子传输的系统也可能在 8B 块之间撕裂.真实示例:多路 Opteron具有 HyperTransport 互连的 K10 似乎在单个套接字内具有原子 16B 加载/存储,但不同套接字上的线程可以观察到撕裂.

Correct. There's no way to detect when a 128b or 256b store or load is atomic all the way through the cache-coherency system. (https://gcc.gnu.org/bugzilla/show_bug.cgi?id=70490). Even a system with atomic transfers between L1D and execution units can get tearing between 8B chunks when transferring cache-lines between caches over a narrow protocol. Real example: a multi-socket Opteron K10 with HyperTransport interconnects appears to have atomic 16B loads/stores within a single socket, but threads on different sockets can observe tearing.

但是如果你有一个共享的对齐的 double 数组,你应该能够对它们使用向量加载/存储,而不会在任何给定的 double.

But if you have a shared array of aligned doubles, you should be able to use vector loads/stores on them without risk of "tearing" inside any given double.

矢量加载/存储的每元素原子性并收集/分散?

我认为可以安全地假设对齐的 32B 加载/存储是通过不重叠的 8B 或更宽的加载/存储完成的,尽管英特尔不保证这一点.对于未结盟的行动,假设任何事情可能都不安全.

I think it's safe to assume that an aligned 32B load/store is done with non-overlapping 8B or wider loads/stores, although Intel doesn't guarantee that. For unaligned ops, it's probably not safe to assume anything.

如果您需要 16B 原子负载,您唯一的选择是lock cmpxchg16b,使用 desired=expected.如果成功,它将用自身替换现有值.如果失败,那么您将获得旧内容.(极端情况:这个加载"在只读内存上出错,所以要小心你传递给执行此操作的函数的指针.)此外,与可以离开的实际只读加载相比,性能当然是可怕的处于共享状态的缓存行,这不是完整的内存屏障.

If you need a 16B atomic load, your only option is to lock cmpxchg16b, with desired=expected. If it succeeds, it replaces the existing value with itself. If it fails, then you get the old contents. (Corner-case: this "load" faults on read-only memory, so be careful what pointers you pass to a function that does this.) Also, the performance is of course horrible compared to actual read-only loads that can leave the cache line in Shared state, and that aren't full memory barriers.

16B 原子存储和 RMW 都可以使用 lock cmpxchg16b 显而易见的方式.这使得纯存储比常规向量存储昂贵得多,特别是如果 cmpxchg16b 必须重试多次,但原子 RMW 已经很昂贵了.

16B atomic store and RMW can both use lock cmpxchg16b the obvious way. This makes pure stores much more expensive than regular vector stores, especially if the cmpxchg16b has to retry multiple times, but atomic RMW is already expensive.

与lock cmpxchg16b相比,将向量数据移入/移出整数reg的额外指令不是免费的,但也不昂贵.

The extra instructions to move vector data to/from integer regs are not free, but also not expensive compared to lock cmpxchg16b.

# xmm0 -> rdx:rax, using SSE4

movq rax, xmm0

pextrq rdx, xmm0, 1

# rdx:rax -> xmm0, again using SSE4

movq xmm0, rax

pinsrq xmm0, rdx, 1

在 C++11 术语中:

In C++11 terms:

atomic<__m128d> 即使对于只读或只写操作(使用 cmpxchg16b)也会很慢,即使实现最佳.atomic<__m256d> 甚至不能是无锁的.

atomic<__m128d> would be slow even for read-only or write-only operations (using cmpxchg16b), even if implemented optimally. atomic<__m256d> can't even be lock-free.

alignas(64) atomic 理论上仍然允许对读取或写入它的代码进行自动向量化,只需要 movq rax, xmm0 然后 xchg 或cmpxchg 用于 double 上的原子 RMW.(在 32 位模式下,cmpxchg8b 可以工作.)不过,您几乎肯定不会从编译器那里获得好的 asm!

alignas(64) atomic<double> shared_buffer[1024]; would in theory still allow auto-vectorization for code that reads or writes it, only needing to movq rax, xmm0 and then xchg or cmpxchg for atomic RMW on a double. (In 32-bit mode, cmpxchg8b would work.) You would almost certainly not get good asm from a compiler for this, though!

您可以原子地更新一个 16B 的对象,但原子地单独读取 8B 的一半.(我认为这对于 x86 上的内存排序是安全的:在 https://gcc.gnu.org/bugzilla/show_bug.cgi?id=80835).

You can atomically update a 16B object, but atomically read the 8B halves separately. (I think this is safe with respect to memory-ordering on x86: see my reasoning at https://gcc.gnu.org/bugzilla/show_bug.cgi?id=80835).

然而,编译器没有提供任何干净的方式来表达这一点.我编写了一个适用于 gcc/clang 的联合类型双关语:如何使用 c++11 CAS 实现 ABA 计数器?.但是 gcc7 和更高版本不会内联 cmpxchg16b,因为他们正在重新考虑 16B 对象是否应该真正将自己呈现为无锁".(https://gcc.gnu.org/ml/gcc-补丁/2017-01/msg02344.html).

However, compilers don't provide any clean way to express this. I hacked up a union type-punning thing that works for gcc/clang: How can I implement ABA counter with c++11 CAS?. But gcc7 and later won't inline cmpxchg16b, because they're re-considering whether 16B objects should really present themselves as "lock-free". (https://gcc.gnu.org/ml/gcc-patches/2017-01/msg02344.html).

这篇关于x86_64 上的原子双浮点或 SSE/AVX 矢量加载/存储的文章就介绍到这了,希望我们推荐的答案对大家有所帮助,也希望大家多多支持编程学习网!

本文标题为:x86_64 上的原子双浮点或 SSE/AVX 矢量加载/存储

- 使用/clr 时出现 LNK2022 错误 2022-01-01

- 与 int by int 相比,为什么执行 float by float 矩阵乘法更快? 2021-01-01

- Stroustrup 的 Simple_window.h 2022-01-01

- 一起使用 MPI 和 OpenCV 时出现分段错误 2022-01-01

- STL 中有 dereference_iterator 吗? 2022-01-01

- 静态初始化顺序失败 2022-01-01

- 如何对自定义类的向量使用std::find()? 2022-11-07

- 从python回调到c++的选项 2022-11-16

- C++ 协变模板 2021-01-01

- 近似搜索的工作原理 2021-01-01